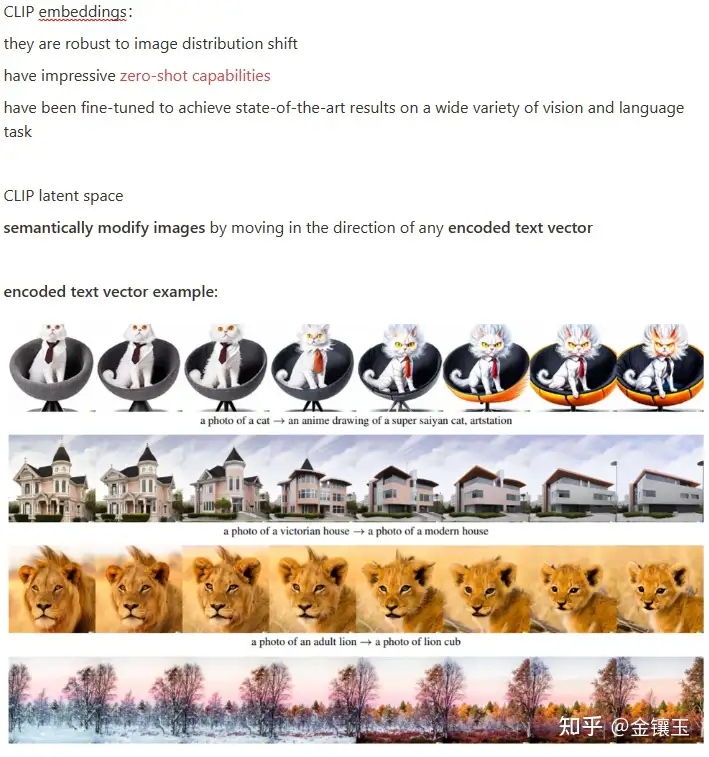

AK on X: "Visualization of reconstructions of CLIP latents from progressively more PCA dimensions (20, 30, 40, 80, 120, 160, 200, 320 dimensions), with the original source image on the far right."
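The idea in the caption above (keep only the top-k principal components of a latent, then map back) can be sketched as follows. This is a minimal illustration, not the method behind the figure: it assumes NumPy is available, uses synthetic vectors as a stand-in for real CLIP latents, and the width of 512 and the helper name `pca_reconstruct` are hypothetical choices. It measures reconstruction error in latent space only; decoding latents back to images is out of scope here.

```python
import numpy as np

def pca_reconstruct(latents: np.ndarray, k: int) -> np.ndarray:
    """Project latents onto their top-k principal components and map back."""
    mean = latents.mean(axis=0)
    centered = latents - mean
    # Rows of vt are principal directions, ordered by decreasing variance.
    _, _, vt = np.linalg.svd(centered, full_matrices=False)
    basis = vt[:k]                              # shape (k, dim)
    return centered @ basis.T @ basis + mean    # back in the original space

rng = np.random.default_rng(0)
# Synthetic stand-in for CLIP latents (assumption): 600 vectors of width 512,
# with variance that decays across coordinates.
latents = rng.normal(size=(600, 512)) * np.linspace(3.0, 0.1, 512)

# Same dimension counts as in the caption.
errors = {}
for k in (20, 30, 40, 80, 120, 160, 200, 320):
    recon = pca_reconstruct(latents, k)
    errors[k] = float(np.mean((latents - recon) ** 2))
    print(f"k={k:3d}  mean squared error={errors[k]:.4f}")
```

The error shrinks as k grows, which mirrors the progressively sharper reconstructions the caption describes.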

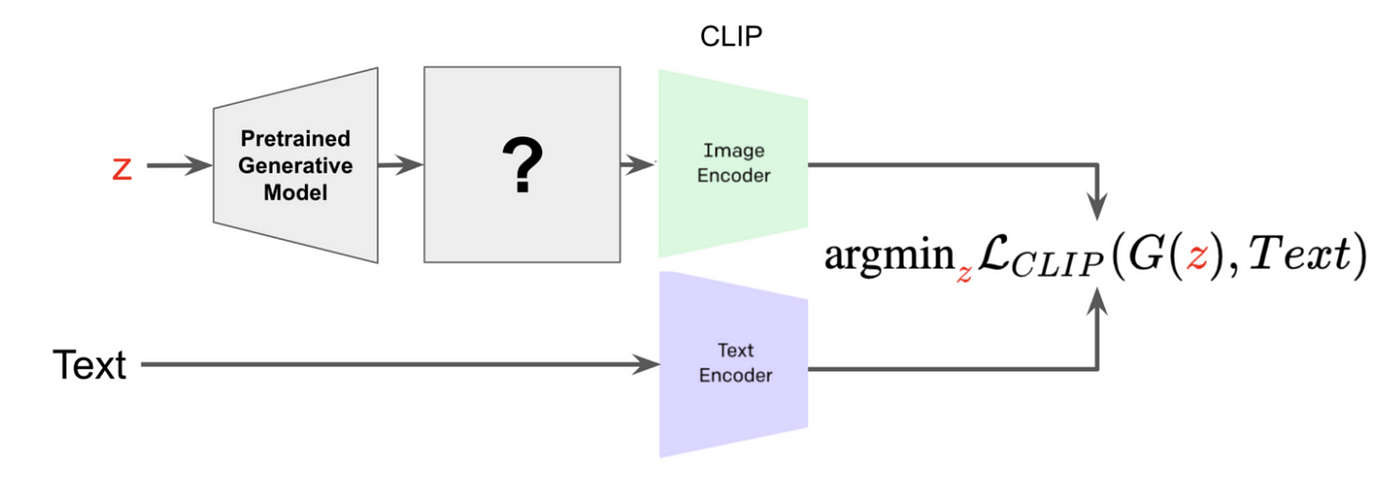

(Left) Overview of our proposed CLIP-guided latent optimization to find...

OpenAI's DALL-E 2 paper "Hierarchical Text-Conditional Image Generation with CLIP Latents" has been updated with an added section, "Training details" (see Appendix C): r/bigsleep

CLIP Text Embeddings. This plot shows a TSNE of CLIP's pooled output...

![MosaicML, now part of Databricks! on X: "[4/8] Speedup 2: Precomputing Latents. The VAE image encoder and CLIP text encoder are pre-trained and frozen when training SD2. That means we can pre-compute](https://pbs.twimg.com/media/Fu0UET1aUAYD7xI.jpg:large)
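The speedup named in the caption above rests on one observation: a frozen encoder returns the same output for the same input, so its outputs can be computed once and reused every epoch. The sketch below illustrates only that caching pattern. `frozen_encoder`, `CountingEncoder`, and `precompute` are hypothetical names, and the toy encoder is a deterministic stand-in, not a real VAE or CLIP model.

```python
from typing import Callable, Dict, Iterable, List

Vector = List[float]

def frozen_encoder(text: str) -> Vector:
    """Hypothetical stand-in for a frozen encoder: deterministic, no trainable weights."""
    return [float(len(text)), float(sum(map(ord, text)) % 97)]

class CountingEncoder:
    """Wraps an encoder and counts forward passes, to make the saving visible."""
    def __init__(self, encoder: Callable[[str], Vector]) -> None:
        self.encoder = encoder
        self.calls = 0

    def __call__(self, text: str) -> Vector:
        self.calls += 1
        return self.encoder(text)

def precompute(samples: Iterable[str], encoder: Callable[[str], Vector]) -> Dict[str, Vector]:
    """Run the frozen encoder once per unique sample and store the result."""
    cache: Dict[str, Vector] = {}
    for sample in samples:
        if sample not in cache:
            cache[sample] = encoder(sample)
    return cache

captions = ["a cat", "a dog", "a red car", "a tree"]
encoder = CountingEncoder(frozen_encoder)
cache = precompute(captions, encoder)

# Training-loop stand-in: each epoch reads cached latents instead of re-encoding.
for epoch in range(3):
    for caption in captions:
        latent = cache[caption]  # dictionary lookup, no encoder forward pass

print(f"encoder calls: {encoder.calls} (without caching: {3 * len(captions)})")
```

In a real pipeline the cache would more likely be written to disk alongside the dataset than held in a dictionary; the in-memory version here is just the smallest form of the idea.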

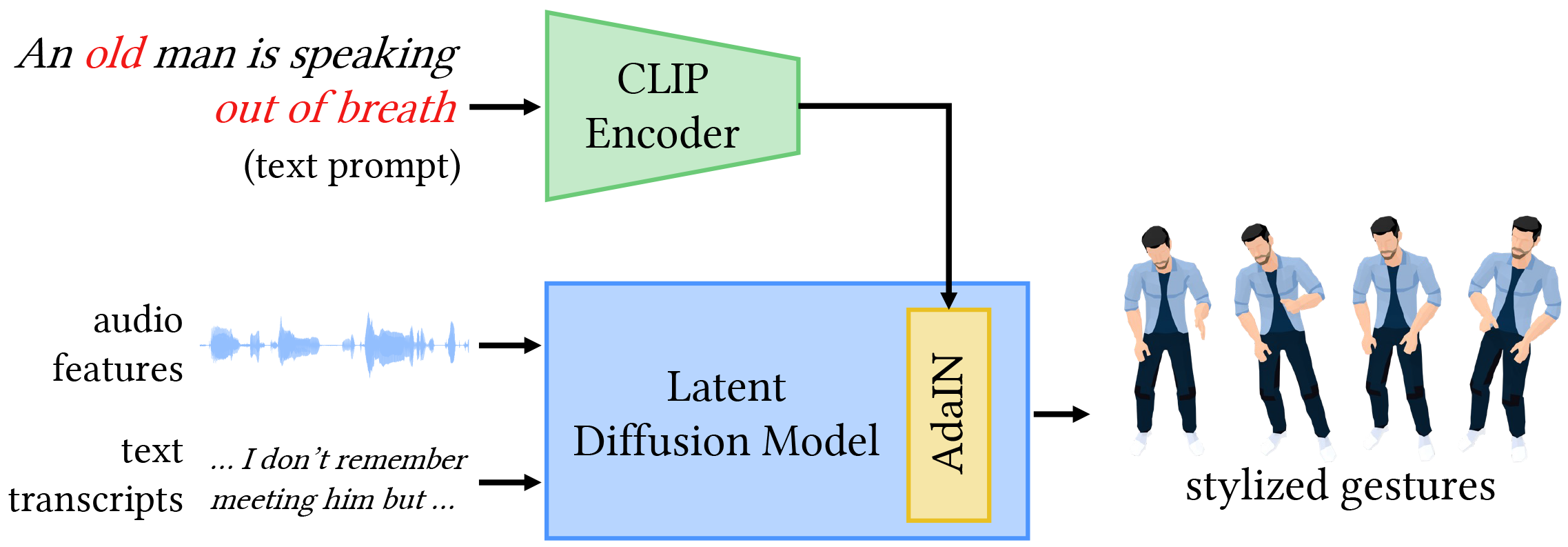

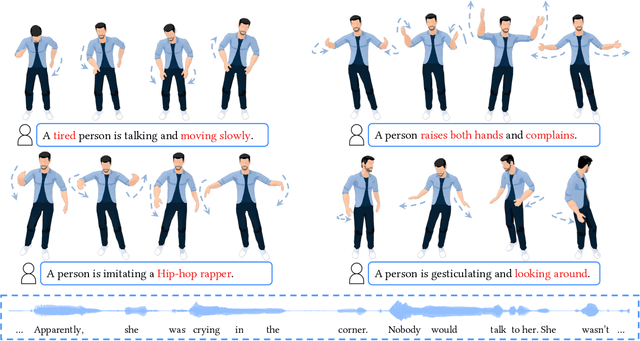

![[Old Version] GestureDiffuCLIP: Gesture Diffusion Model with CLIP Latents - YouTube](https://i.ytimg.com/vi/513EONcXOck/hq720.jpg?sqp=-oaymwEhCK4FEIIDSFryq4qpAxMIARUAAAAAGAElAADIQj0AgKJD&rs=AOn4CLDgnb8uZJm4MdIIg6KhJfcVHk-gWA)